A Magic Trick, Performed By Computer, On Me

On the difference between finding a pattern and finding the dictionary. The trick behind every Voynich decipherment, every AI hallucination, and every face in a coffee stain.

Every eighteen months or so, somebody cracks the Voynich Manuscript.

You have probably seen one of these headlines without really registering it, the way you see headlines about new longest-living tortoises or sightings of the Loch Ness monster: a kind of ambient cultural furniture that passes across the field of your attention without leaving much of a dent. A researcher at some university announces that the most famous undeciphered text in Western history has finally given up its secrets. The university puts out a press release. The press release gets picked up by the wire services. For about three days, the cracked-it story competes with whatever else is going on that week, and then it vanishes, and then eighteen months later someone else cracks it again.

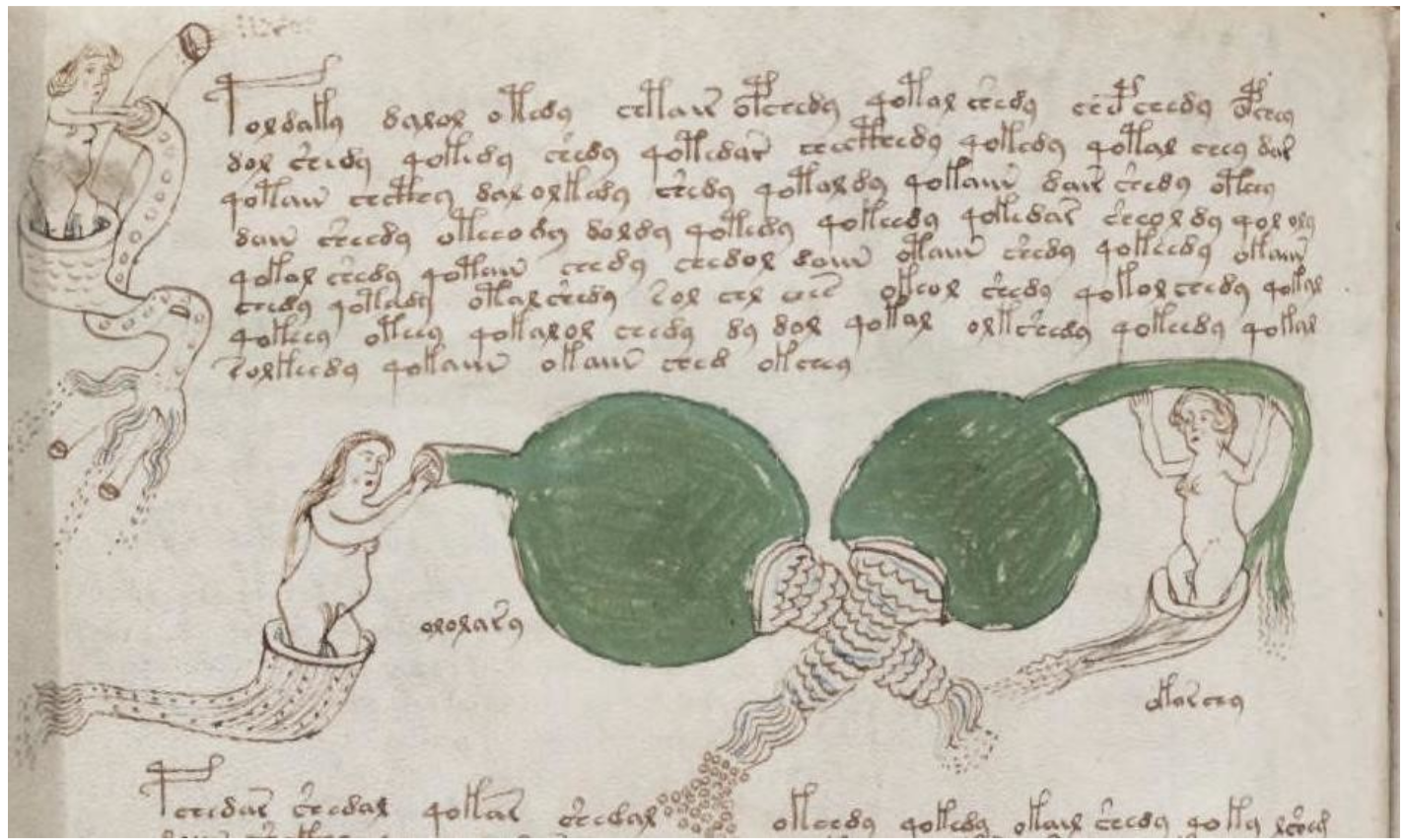

Before going further, it is probably worth pausing on what the thing actually is, because "the most famous undeciphered text in Western history" is the kind of phrase that lets a text stay famous without anyone really knowing what it looks like. The Voynich Manuscript is a 240-page illustrated codex, carbon-dated to the early 1400s, written in a script that matches nothing in any known language and densely illustrated with botanical drawings, astronomical diagrams, and pages of small nude women bathing in elaborate green plumbing. The Polish-American book dealer Wilfrid Voynich bought it from a Jesuit college outside Rome in 1912, and the manuscript has carried his name since. It has lived at Yale's Beinecke Rare Book and Manuscript Library since 1969, and you can page through the entire thing online for free. It looks like nothing else. I have even taken my own swing at cracking it.[1]

I want to tell you why this cycle keeps happening. Not the specific reasons any given attempt falls apart (those tend to be idiosyncratic), but the deeper recurring reason, the reason the same thing keeps happening on the same schedule for over a century. It is, I think, genuinely interesting. It turns out to have almost nothing to do with the Voynich Manuscript specifically, and almost everything to do with a particular kind of magic trick that the human mind, and increasingly our most sophisticated software, is susceptible to in a way that I believe we are only just beginning to reckon with.

I am going to try to show you the trick. I am going to perform it on you, in fact. Or, more precisely, I am going to tell you about the time I performed it on myself, caught myself in the act, and then spent a while figuring out why nobody else seemed to be catching it.

Here is what happened.

I had written some software that did something very specific: it took a piece of Latin text, ran it through a made-up code (like the kind children invent, where every letter gets replaced by a different letter), and then ran it back through the reverse of the same code, so that what came out was the original Latin again. Perfectly intact. I then checked the output against a dictionary to confirm it was real Latin. You would expect this check to come back with a very high match rate, because what I was checking was, after all, actual Latin.

The experiment, the part that made it an experiment, was that I checked the Latin against a dictionary of Hebrew.

I should pause on why this is funny. Latin and Hebrew are not relatives. They belong to different language families (Indo-European and Semitic, respectively, which are about as distant as language families get), they use different alphabets, they handle vowels differently, they conjugate differently, and apart from a handful of religious loanwords they share essentially no vocabulary. Hebrew is what you would deliberately pick if you wanted a clean negative control for a Latin dictionary, the linguistic equivalent of testing your bathroom scale by weighing an empty room. The expected match rate, on any normal account of what a dictionary is supposed to do, is essentially zero.

The dictionary said almost one in five words matched.

The number had the quality of a typo. I ran the script again, slowly, the way you re-check a thermometer when the reading does not match the room. The number stayed where it was.

I ran this result through five different statistical methods, each of them respected, most of them standard tools in scientific fields where getting this kind of thing right actually matters (medical research, genetics, signal processing). Every single one of them agreed. The rawest count said 19% match. The most sophisticated method, the one scientists use to cross-check results in gene-sequencing labs, said 18%. Even the method specifically designed to filter out random background noise claimed 9.5% of the matches were real.

Five different expert tools. All of them looking at correctly-decoded Latin. All of them nodding along with the Hebrew dictionary.

This is going to require some explaining.
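For readers who want the setup in concrete form, here is a minimal sketch of the round trip. It is not the software described above: the substitution key is random, the Latin is a single sentence, and the five-word "wrong dictionary" is a hypothetical stand-in for an unrelated language's word list. The names `make_key`, `apply_key`, and `match_rate` are illustrative only.

```python
import random
import string

def make_key(seed=0):
    """Build a random letter-for-letter substitution key and its inverse."""
    rng = random.Random(seed)
    letters = list(string.ascii_lowercase)
    shuffled = letters[:]
    rng.shuffle(shuffled)
    encode = dict(zip(letters, shuffled))
    decode = {v: k for k, v in encode.items()}
    return encode, decode

def apply_key(text, key):
    """Substitute each letter; spaces and unmapped characters pass through."""
    return "".join(key.get(ch, ch) for ch in text)

def match_rate(tokens, dictionary):
    """Fraction of tokens that appear verbatim in the dictionary."""
    if not tokens:
        return 0.0
    return sum(t in dictionary for t in tokens) / len(tokens)

# Toy stand-ins (assumptions, not the essay's data).
latin = "in principio erat verbum et verbum erat apud deum"
wrong_dictionary = {"in", "et", "at", "era", "de"}

encode, decode = make_key()
round_trip = apply_key(apply_key(latin, encode), decode)

# The round trip returns the original Latin, yet the unrelated
# word list still "recognizes" two of the nine tokens.
rate = match_rate(round_trip.split(), wrong_dictionary)
print(round_trip == latin, round(rate, 3))
```

Even in this toy, the only tokens that match are the two-letter ones, which is a fair preview of where the explanation is headed.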

How The Trick Works

The mechanism is almost embarrassingly simple. Embarrassingly simple is, I have come to believe, the natural state of most genuinely good magic tricks: the difficulty of magic is rarely in the cleverness of the mechanism and almost always in the directorial work of preventing the audience from looking at the right place at the right moment. Persi Diaconis, the Stanford statistician who spent his early career as a professional magician (he left home at fourteen to travel with the legendary card-handler Dai Vernon, returning to school for math at twenty-four) before deciding probability theory was on balance the more interesting of the two disciplines, has written about how magic and statistics share this exact problem: you are trying to manage where the attention goes, because the attention is the thing that decides whether the audience notices what is actually happening.[2]

Here is where the attention is being managed.

Imagine you are driving behind a car whose license plate reads ESCAPE. You assume immediately that the driver paid extra for the plate; you do not assume coincidence. You do not assume the DMV happened to issue a string of six random letters that landed exactly on a real English word. This is reasonable. Random six-letter sequences do not, as a rule, spell things. The fact that this one does means somebody chose it.

This intuition is so natural it does not feel like an intuition. It feels like how reality works.

The intuition breaks down when the words get short.

Most of the "words" that computer algorithms produce when they try to decode mystery scripts are short. Not ESCAPE short. Two, three, four letters. And a big dictionary, it turns out, contains approximately all possible short strings. Every two-letter combination you could make is in there somewhere. Most three-letter combinations too. A 200,000-word dictionary is less a reference book and more a finely-meshed statistical fishnet: almost anything short you throw at it will stick.[3]

(Every dictionary, incidentally, contains the word "dictionary.")

So when a decipherment algorithm produces a bunch of three-letter fragments and checks them against a big dictionary, it reports a huge match rate. This looks like success. The same number would come back if you fed the dictionary literal random gibberish of the same length, because the dictionary is catching essentially everything.
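The fishnet claim is easy to check against any word list you have on hand. The sketch below is a hedged illustration: `coverage_by_length` is a hypothetical helper, and the two-letter alphabet and six-word dictionary are toy assumptions chosen so the arithmetic can be done by eye. For uniformly random strings, the coverage at a given length is exactly the chance match rate at that length.

```python
import string

def coverage_by_length(dictionary, max_len=4, alphabet=string.ascii_lowercase):
    """For each length n, the fraction of all possible n-letter strings
    over `alphabet` that appear in the dictionary."""
    letters = set(alphabet)
    coverage = {}
    for n in range(1, max_len + 1):
        hits = sum(
            1 for w in set(dictionary)
            if len(w) == n and set(w) <= letters
        )
        coverage[n] = hits / len(letters) ** n
    return coverage

# Toy example: alphabet {a, b}, so there are 2, 4, and 8 possible
# strings of lengths 1, 2, and 3.
toy_dictionary = {"a", "b", "ab", "ba", "aa", "aba"}
toy_coverage = coverage_by_length(toy_dictionary, max_len=3, alphabet="ab")
print(toy_coverage)  # {1: 1.0, 2: 0.75, 3: 0.125}
```

Pointing the same function at a real word list with the default 26-letter alphabet shows how quickly coverage falls off as the strings get longer.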

I confirmed this later by running the same correctly-decoded Latin against dictionaries of Italian, Spanish, German, Russian, and Chinese. The Latin-script dictionaries (Italian, Spanish, German) all hit at non-trivial rates, with Italian's noise floor alone running close to forty percent, because they share an alphabet and a lot of character-frequency DNA with Latin. The non-Latin-script dictionaries (Russian, Chinese) hit at essentially zero, save for a small ASCII-loanword fraction baked into the source files. Hebrew, despite being in its own script, still produced an apparent match rate of about 18 percent. The cause was the same ASCII residue, only at larger volume: enough Latin-character loanwords and proper names had survived inside its dictionary file to chance-collide with short fragments of decoded Latin. The dictionary's nationality, it turns out, is not what is doing the work. The character distribution is.

What the match rate measures, when you take it apart, is something much closer to: how many short words are in your output. Which, as a measure of whether you have deciphered an ancient script, is approximately as informative as measuring how many letters are in your output.
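One way to see this in your own output is to stop reporting a single match rate and break it down by token length instead. The following is a small sketch under assumed toy data; `match_rate_by_length` is a hypothetical helper, not part of any published tool.

```python
from collections import defaultdict

def match_rate_by_length(tokens, dictionary):
    """Return {length: (matches, total, rate)} for the decoded tokens."""
    counts = defaultdict(lambda: [0, 0])  # length -> [matches, total]
    for t in tokens:
        counts[len(t)][1] += 1
        if t in dictionary:
            counts[len(t)][0] += 1
    return {n: (m, tot, m / tot) for n, (m, tot) in sorted(counts.items())}

# Toy data (assumptions for illustration only).
decoded = ["et", "in", "ab", "erat", "verbum", "deum"]
word_list = {"et", "in", "ab", "deum"}

by_length = match_rate_by_length(decoded, word_list)
for n, (m, tot, r) in by_length.items():
    print(f"length {n}: {m}/{tot} matched ({r:.0%})")
```

If nearly all of the matches sit in the two- and three-letter rows, the headline percentage is mostly reporting the length of the tokens.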

The Trick Caught In The Wild

The most legible recent case is the 2019 University of Bristol claim that the Voynich Manuscript was written in something called "proto-Romance."

The brief version: a researcher at a respected British university published a paper in a peer-reviewed journal claiming to have deciphered the manuscript. The university issued a press release. Mainstream press picked it up. The author offered translations of selected words from selected pages, and the translations had the just-plausible-enough quality that made you want to believe them.

Within days, the medieval-studies community took it apart. Lisa Fagin Davis, the Executive Director of the Medieval Academy of America, walked through the paper publicly and methodically. The translations worked because they had been chosen to work. The methodology, when applied systematically to whole pages instead of cherry-picked phrases, fell apart. "Proto-Romance," it turned out, was not a recognized linguistic construct.

I want to be careful here, because it would be cheap and untrue to suggest the researcher was being dishonest. He genuinely saw the patterns he reported. The patterns were, in a narrow technical sense, there. The problem was that with enough symbols to choose from, enough candidate languages available, and enough flexibility about what counted as a match, you could demonstrate that kind of pattern for almost anything. The search was too broad. The target was too small. The metric counted hits without ever asking how many hits the noise would have produced on its own.

The Voynich Manuscript has been "deciphered" at least twenty-five times since it was rediscovered in 1912.[4] It has been identified as Hebrew, as Ukrainian, as Manchu, as early Turkish, as a coded form of Latin, as the boyhood notebook of Leonardo da Vinci, and in at least one memorable instance as a document in a dialect of Nahuatl written by a 16th-century Mesoamerican healer who had somehow come into possession of medieval European vellum.

The most committed of the failures may have been William Romaine Newbold, who held the Adam Seybert chair in moral and intellectual philosophy at the University of Pennsylvania, and who in April 1921 stood up at a joint meeting of the American Philosophical Society and the College of Physicians of Philadelphia to announce that the manuscript was the encoded laboratory notebook of Roger Bacon and that Bacon had, several centuries ahead of schedule, observed both biological cells and the spiral structure of the Andromeda Nebula. The encoding, in Newbold's account, worked by reading microscopic shorthand markings inside each visible character, which made the manuscript a sort of medieval microfiche. The translations Newbold produced were, in places, fluent colloquial English of the 1920s, including phrases that would have been mildly anachronistic in the mouth of any 13th-century friar. He never addressed this. He died in 1926. John Matthews Manly, a Chaucer scholar at the University of Chicago, spent the next five years gently demonstrating, in print, that the microscopic shorthand markings were cracks left by ink drying on rough vellum.

None of these solutions has ever been independently verified. At some point the regularity starts to look like data. What this cycle reliably produces, on its eighteen-month rhythm, is the experience of decipherment, which is a different product entirely from a decipherment, and which the pattern-matching machinery of the human mind keeps generating no matter how many previous attempts have failed.

Breaking The Illusion

The fix is, with a symmetry I find slightly suspect, also small. It is to ask the question the trick specifically depends on you not asking: if I ran my analysis on text that I knew contained nothing, how many matches would I get?

Pause on this, because the simplicity is doing a lot of work. The whole system of dictionary-matching-as-success-metric rests on the unspoken assumption that matches happen because the decoding is correct. The fix is to test that assumption directly. Generate fake text that looks statistically like your decoded output but contains no actual meaning, check it against the same dictionary, and count the matches. That number is your "noise floor": what you would get from nothing. Any real result below that number is worse than nothing. Any real result equal to that number is nothing wearing a costume.
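The recipe in the paragraph above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the published framework: fake tokens are drawn character by character from the decoded output's own character frequencies, token lengths are kept identical to the real output, and `empirical_noise_floor` is a hypothetical helper name.

```python
import random
from collections import Counter

def empirical_noise_floor(tokens, dictionary, trials=200, seed=0):
    """Average dictionary match rate of meaningless text that shares the
    decoded output's character frequencies and token lengths."""
    rng = random.Random(seed)
    char_counts = Counter("".join(tokens))
    alphabet = list(char_counts)
    weights = [char_counts[c] for c in alphabet]
    lengths = [len(t) for t in tokens]

    rates = []
    for _ in range(trials):
        # Same lengths as the real tokens, characters drawn at random.
        fake = [
            "".join(rng.choices(alphabet, weights=weights, k=n))
            for n in lengths
        ]
        rates.append(sum(f in dictionary for f in fake) / len(fake))
    return sum(rates) / len(rates)

# Toy usage (assumed data): compare the observed rate to the floor.
decoded = ["et", "in", "te", "ni", "tine"]
word_list = {"et", "in", "te", "ne", "it"}
observed = sum(t in word_list for t in decoded) / len(decoded)
floor = empirical_noise_floor(decoded, word_list)
print(f"observed {observed:.2f}, noise floor {floor:.2f}")
```

The comparison that matters is the gap between `observed` and `floor`, not the size of `observed` on its own.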

This is not a new idea. Every field that has dealt with this kind of problem has independently converged on some version of it. Medicine has placebo controls. Statistics has the null distribution. Signal processing has the noise floor. Genetics has something called a BLAST E-value, which is basically this exact idea adapted for gene sequences. The version I proposed for decipherment is, conceptually, a relatively modest adaptation of well-known infrastructure from older fields.

What I added was one extra accounting step, and it turns out to be the step that matters.

The standard version of the fix asks, for each word in your decoded output: does this word show up more in the real data than it would in random noise? If yes, count it as real signal.

My version asks the same thing, and then also asks the flipped version: are there words that show up only in the random noise, and never in the real data, that still hit the dictionary? If yes, treat those as anti-signal. Subtract them.

The reason this matters, the reason it caught the Hebrew-on-Latin absurdity when the other methods did not, is that the other methods were looking at each word in isolation. They could see words that were common in the real text. They could not see the gap where words were missing in the real text but showing up in the noise. That gap is diagnostic. It tells you that random character noise, at your particular character frequencies, is capable of producing dictionary matches entirely on its own. The noise is doing work it should not be able to do. The size of that gap is the size of the trick.
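Here is one possible reading of that two-sided accounting, written as a sketch. The function name, the use of a single noise sample the same size as the real output, and the exact subtraction rule are all assumptions made for illustration; the actual framework may weight things differently.

```python
from collections import Counter

def two_sided_score(real_tokens, noise_tokens, dictionary):
    """Return (signal, anti_signal, net_rate).

    signal:      dictionary hits in the real output beyond what the
                 noise sample produced for the same word.
    anti_signal: dictionary hits that occur only in the noise sample.
    net_rate:    (signal - anti_signal) / number of real tokens.
    """
    real = Counter(t for t in real_tokens if t in dictionary)
    noise = Counter(t for t in noise_tokens if t in dictionary)

    signal = sum(real[w] - noise[w] for w in real if real[w] > noise[w])
    anti_signal = sum(n for w, n in noise.items() if w not in real)
    return signal, anti_signal, (signal - anti_signal) / len(real_tokens)

# Toy data (assumed): the naive match rate of the real tokens is 3/4.
real_tokens = ["et", "et", "in", "xq"]
noise_tokens = ["et", "ab", "ab", "zz"]
word_list = {"et", "in", "ab"}

result = two_sided_score(real_tokens, noise_tokens, word_list)
print(result)  # (2, 2, 0.0)
```

In this toy, a naive count reports a 75 percent match, while the two-sided score nets out to zero because the noise sample hits the dictionary just as readily through words the real text never used.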

The entire correction, the full apparatus that catches what five standard methods miss, boils down to about five lines of Python and one equation.

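The whole paper reduces to one equation. Given a dictionary D and a decoded token stream with empirical character distribution p(·) and token-length distribution π(·), the noise floor is:

$$\text{noise floor} = \sum_{w \in D} \pi(|w|) \prod_{i=1}^{|w|} p(w_i)$$

where |w| is the length of the dictionary word w and w_i is its i-th character.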

For every word in the dictionary, multiply together the character frequencies of the decoded output, weight by how often the decoded corpus contains tokens of that length, and sum. That number is how many of your tokens would match by accident. Everything above it is signal; everything below it is noise a naive evaluator would mistake for signal.
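Read literally, the description above translates into a short function. This is a sketch under assumptions rather than the paper's own code: `analytic_noise_floor` is a hypothetical name, characters are treated as independent draws from the decoded output's frequencies, and the result is expressed as the expected fraction of tokens that match by accident.

```python
from collections import Counter
from math import prod

def analytic_noise_floor(tokens, dictionary):
    """Sum over dictionary words of
    (share of tokens with that word's length) *
    (product of the decoded output's character frequencies)."""
    char_counts = Counter("".join(tokens))
    total_chars = sum(char_counts.values())
    p = {c: n / total_chars for c, n in char_counts.items()}

    length_counts = Counter(len(t) for t in tokens)
    pi = {n: k / len(tokens) for n, k in length_counts.items()}

    return sum(
        pi.get(len(w), 0.0) * prod(p.get(c, 0.0) for c in w)
        for w in dictionary
    )

# Toy check (assumed data): p(a) = p(b) = 0.5 and every token has length 2,
# so each two-letter dictionary word contributes 0.25 and "abc" contributes 0.
toy_floor = analytic_noise_floor(["ab", "ba"], {"ab", "aa", "abc"})
print(toy_floor)  # 0.5
```

Dictionary words containing characters the decoded output never uses, or lengths it never produces, drop out of the sum automatically, which is why a dictionary in a different script contributes almost nothing.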

The Larger Magic

Here is the thing I keep circling back to, the part that started as a footnote in my own thinking and has, in the reading and writing since, become the part I most want to talk about. I am going to make a larger point now, and I want to flag that I am making it, because making a larger point after a technical walkthrough is the kind of move that can easily drift into gesturing-at-bigger-things. You should feel free to be slightly skeptical over the next few paragraphs. I am being slightly skeptical of me.

Underneath the lookup tables and the percent signs, the dictionary collision effect is a much older problem, one humans have been walking into for as long as we have had brains complex enough to find patterns at all. In 1958, a German psychiatrist named Klaus Conrad coined a word for it: apophenia, which means roughly "the unmotivated seeing of connections." Conrad was studying the early stages of schizophrenia, and what he noticed in his patients had less to do with hallucinations than with a strange relationship to real things. They saw meaningful patterns in objects that were there but did not actually mean anything. The crack in the wall was a real crack. The patient simply believed it had been put there as a sign.

Apophenia is everywhere once you have a name for it. A five-year-old insists the cloud over the parking lot is a dragon. A woman in Florida finds the face of the Virgin Mary in a grilled cheese sandwich and, having found her, sells the sandwich on eBay for twenty-eight thousand dollars.[5] A friend of a friend tells anyone who will listen that a specific song keeps appearing at meaningful moments in her life. The brain is a pattern-recognition machine. It cannot be reasoned with on the question of whether a given pattern is real, because from the brain's own perspective, the pattern being real is what finding it feels like.

The dictionary collision effect is the same phenomenon in code form. The dictionary does not want to find a match the way a brain wants to find a face in a coffee stain. But the structural conditions are identical: a large search space, a small target, and a metric that counts hits without ever asking how many hits the search would have produced from noise.

In AI research, this kind of failure has a technical name: hallucination. The word refers to a model output that looks and sounds like a correct answer without being one. The machinery that produces a hallucination is the same machinery that produces correct answers; in a hallucination, that machinery has locked onto a pattern which happens not to be true. The model has no way to tell which it has done.

A 2025 NIH paper, "Enhancing Substance Use Detection in Clinical Notes with Large Language Models", caught one version of this in the wild: frontier language models were making confident inferences the underlying text did not support, reading "injection drug use" in a chart and concluding heroin specifically, reading a prescription-drug mention and concluding illicit use.[6] The models were doing exactly what they were built to do, which is pattern-matching. Drug mentions and the words around them appear together so often in training data that the words around them had effectively become a proxy for the drugs themselves. A second 2025 paper, "When Bias Pretends to Be Truth", demonstrated that the standard tools for detecting hallucinations miss this entire class of mistake.[7] The reason runs deep into the architecture. The model's confidence reflects how strong the pattern is. It says nothing about whether the pattern is true. When the wrong pattern is strong, the model is confident, and the confidence-based detector waves it through.

The worst version of the news is that this cannot be engineered away. A 2024 paper by Xu, Jain, and Kankanhalli, "Hallucination is Inevitable: An Innate Limitation of Large Language Models", proved the result formally: any computable language model, however large and however it is trained, must give wrong answers on some inputs, in the same sense that certain problems in computing are provably undecidable rather than merely unsolved. OpenAI's own researchers conceded the point in a September 2025 paper, and then argued, fairly, that most of the hallucination rate in current systems comes from a softer source than the proof: standard benchmarks reward confident guessing and penalize "I don't know," which trains models to guess confidently on questions they should have abstained on. So there is good news and bad news. The theoretical floor is real, which is the bad news. The distance between where current models sit and where they could theoretically sit is also real, and probably large, and probably partly closeable, which is the good news. Nobody knows how big that gap is. What is not in dispute is that the floor exists, and that every language model now sitting inside a hospital chart or a legal brief or a news feed is producing some fraction of its outputs under a mathematical guarantee: confident in tone, wrong in fact, and undetectable to the machinery that made them.

The pattern-matcher had found a pattern. The pattern was wrong. The pattern-matcher could not tell.

This is the same trick. Performed in a different theater, on a much larger stage, by systems now embedded in clinical workflows and legal research and journalistic verification and basically every other domain where someone has decided automated pattern-matching is good enough.

The framework I built is one tiny correction in one narrow domain.[8] But the underlying lesson generalizes. Any time a pattern-finder operates against a large reference, you need an explicit account of what the pattern-finder would have found in the absence of signal. Without that account, you cannot tell discovery apart from confabulation. The pattern-finder cannot tell them apart either, because from the inside, finding a real pattern and finding a chance pattern feel exactly the same.

This is true of dictionary-matching pipelines.

It is true of AI hallucinations.

It is true of human brains looking at coffee stains and seeing the face of someone they have lost.

It is, I suspect, true of considerably more than that, and I am going to leave that claim where it lies, because saying more about it would require me to have answers I do not have, and the one thing this whole essay has been about is noticing the conditions under which confident answers get generated out of nothing in particular and then believed.

Including, you understand, this one.

Footnotes

1. A shorthand hypothesis I sketched out some time before writing this essay, available at mattruckman.com/blog/voynich_shorthand_hypothesis. I am linking it partly out of honesty (it would be off to write an essay about decipherment overconfidence without disclosing my own prior involvement) and partly because the piece is, in retrospect, a fairly clean specimen of exactly the kind of thinking the rest of this essay is about to argue against.

2. The closest intellectual ancestor of what I am describing in this essay is Diaconis and Mosteller's 1989 paper "Methods for Studying Coincidences," in the Journal of the American Statistical Association, which formalizes what they call the law of truly large numbers: with a sample large enough, any sufficiently rare thing happens. The dictionary collision effect is one small lexical-space instance of the same idea. If you read one paper after this essay, that would be the one.

3. I ran the numbers. At two-letter words, a medium-sized dictionary matches essentially every possible combination. At three letters, it is still catching around 90% of random strings. Somewhere past six letters it finally starts letting things through. This is the kind of finding that, once you know it, retroactively compromises your ability to read any decipherment paper that reports a "percentage match" without specifying how long the matched words are. There is no recovering from this knowledge. I am sorry about it.

4. I counted.

5. That actually happened. Diane Duyser, of Hollywood, Florida, sold the sandwich in November 2004 to GoldenPalace.com, an online casino with a side business in buying weird internet artifacts. The sandwich had spent the previous decade in a clear plastic case on Duyser's nightstand, and, by all accounts, never molded.

6. "Enhancing Substance Use Detection in Clinical Notes with Large Language Models" (2025). The error analysis in that paper finds that the most common failure mode is "insufficient evidence, where the model made assumptions unsupported by the text," with concrete examples including "inferring heroin use from injection drug use" and assumptions of illicit use from prescription-drug mentions.

7. "When Bias Pretends to Be Truth: How Spurious Correlations Undermine Hallucination Detection in LLMs," arXiv:2511.07318 (2025). The paper's claim, in its own words: spurious correlations "induce hallucinations that are confidently generated, immune to model scaling, evade current detection methods, and persist even after refusal fine-tuning." Confidence-based and inner-state probing methods both fail because the wrong answer presents to the detector exactly the way a right answer would.

8. If you want the framework, it is on PyPI as `dictcollision`. The full paper, with the math worked out and the validation experiments laid out in deep-dive form, lives at mattruckman.com/papers/dictionary-collision-effect; the code is at github.com/mruckman1/signal-isolation-paper. The code reproduces in under ten minutes on a laptop, which is the sort of detail I am including because I would genuinely like someone to find a bug. Finding bugs is, for reasons I cannot fully articulate, one of the higher forms of intellectual generosity available in the current era.